It is possible to do this with a single Cam Locate node and the snapshot position defined in the Installation menu.

Using the script command `snapshot_position offset=p[0,0,Z,0,0,0]`, you can offset the object detection plane by Z meters.

For example, you can calibrate the camera on the first layer and then offset the detection plane by the height of one layer to detect objects on the next layer.
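As a rough illustration of the arithmetic only, the Z value passed to `snapshot_position offset=p[0,0,Z,0,0,0]` for each layer could be computed as below. This is a Python sketch, not URScript, and it rests on assumptions you should check against your own cell: the camera was calibrated on layer 0, every layer has the same (hypothetical) height, and the sign of Z depends on whether the next layer lies below or above the calibrated one in the offset frame.

```python
def layer_offset_z(layer_index: int, layer_height_m: float, direction: int = -1) -> float:
    """Z offset (meters) of the detection plane for a given layer.

    Hypothetical assumptions, adjust to your cell:
    - the camera was calibrated on layer 0,
    - every layer has the same height layer_height_m,
    - direction is -1 if successive layers lie below the calibrated one along
      Z of the offset frame (e.g. unstacking from the top), +1 if above.
    """
    if direction not in (-1, 1):
        raise ValueError("direction must be -1 or 1")
    return direction * layer_index * layer_height_m


def snapshot_offset_pose(layer_index: int, layer_height_m: float, direction: int = -1) -> list[float]:
    """Pose vector [x, y, z, rx, ry, rz] to use as p[0,0,Z,0,0,0]."""
    z = layer_offset_z(layer_index, layer_height_m, direction)
    return [0.0, 0.0, z, 0.0, 0.0, 0.0]


if __name__ == "__main__":
    LAYER_HEIGHT_M = 0.05  # hypothetical tray-plus-cylinder height
    for layer in range(8):
        print(layer, snapshot_offset_pose(layer, LAYER_HEIGHT_M))
```

On the robot, the equivalent would be one computed offset per layer in a loop, fed to the same Cam Locate node, rather than one node per layer.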

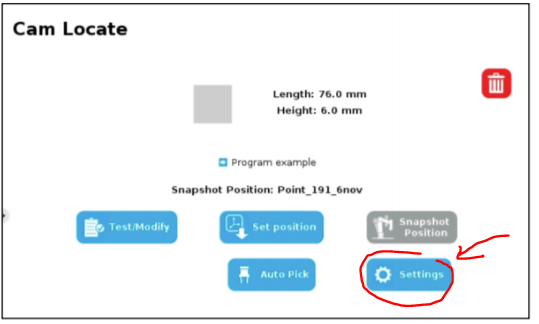

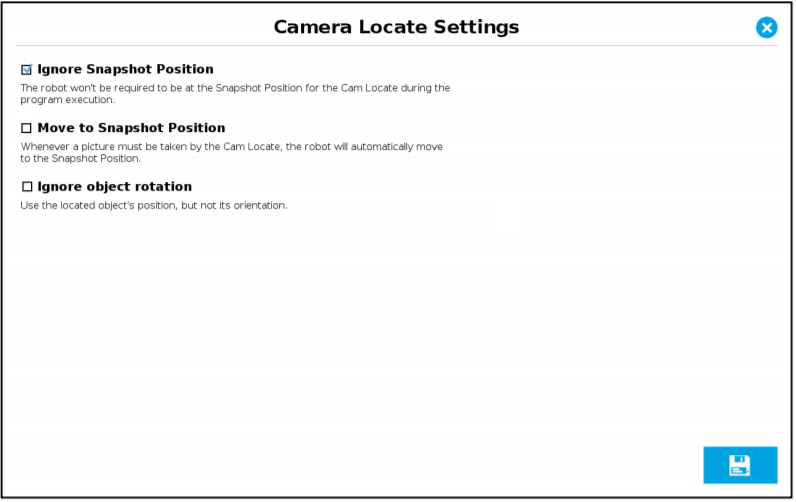

You can also change the Cam Locate node option so that the node can be entered from any position. (In previous versions of the camera URCap, the script command `ignore_snapshot_position=True` was used for this; the option is now built into the Cam Locate node.) You can take the picture from far away or up close; even taking a picture with a different camera orientation should work.

You can first take a picture from far away, then move the robot closer to the object, take a second picture, and pick it.

We often use this kind of double detection to increase positioning precision.
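Applied to the trays described in the question (7 x 8 cylinders per tray, 8 layers), this means a single Cam Locate node can sit inside a loop instead of being duplicated 448 times. The Python sketch below only enumerates the close-up snapshot targets such a loop would visit; the grid pitches, the tray origin at the first cylinder, and the perfectly stacked layers are hypothetical assumptions, and the robot-side steps (move above the target, apply the layer offset, run Cam Locate, optionally take the second picture, pick) are not shown.

```python
from dataclasses import dataclass
from typing import Iterator

ROWS, COLS, LAYERS = 7, 8, 8  # tray layout from the question: 7 x 8 cylinders, 8 layers


@dataclass(frozen=True)
class CloseUpTarget:
    layer: int
    row: int
    col: int
    x_m: float  # hypothetical X of the close-up camera position, tray frame
    y_m: float  # hypothetical Y of the close-up camera position, tray frame
    z_offset_m: float  # detection-plane offset for this layer


def close_up_targets(pitch_x_m: float, pitch_y_m: float, layer_height_m: float,
                     direction: int = -1) -> Iterator[CloseUpTarget]:
    """Yield one close-up snapshot target per cylinder, layer by layer.

    Hypothetical assumptions: cylinders sit on a regular grid with the given
    pitches, measured from the first cylinder of the tray, and all layers are
    stacked exactly on top of each other.
    """
    for layer in range(LAYERS):
        z = direction * layer * layer_height_m
        for row in range(ROWS):
            for col in range(COLS):
                yield CloseUpTarget(layer, row, col,
                                    col * pitch_x_m, row * pitch_y_m, z)


if __name__ == "__main__":
    targets = list(close_up_targets(0.04, 0.04, 0.05))
    print(len(targets))  # prints 448, one entry per cylinder
```

Because the vision result corrects each pick, the nominal grid only needs to be accurate enough to bring the object into the camera's field of view.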

I imagine that, with this many layers, the height of the objects may vary somewhat. You could find the position of the objects' top surface using a force-sensing function such as Find Surface (the robot moves down until it senses a force). Once the robot is on the surface, you can read the Z position of the TCP with `get_actual_tcp_pose()`.
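The measured surface height can then be turned into the Z offset for the detection plane. The Python sketch below shows only that subtraction, plus a rounding check against the nominal layer height. It assumes, hypothetically, that you recorded the TCP Z when touching the top surface during calibration, that both values come from the Z element of `get_actual_tcp_pose()` in the same frame, and that the calibration plane is parallel to that frame's XY plane.

```python
def offset_from_surface(measured_tcp_z_m: float, calibrated_plane_z_m: float) -> float:
    """Z offset to apply to the detection plane, from a Find Surface touch.

    measured_tcp_z_m: TCP Z when touching the current top surface, e.g. the
        Z element of get_actual_tcp_pose() on the robot.
    calibrated_plane_z_m: hypothetical TCP Z recorded once, in the same frame
        and with the same tool, when touching the surface used for calibration.
    """
    return measured_tcp_z_m - calibrated_plane_z_m


def nearest_layer(offset_z_m: float, layer_height_m: float) -> int:
    """Closest nominal layer index for a measured offset (sanity check only)."""
    return round(abs(offset_z_m) / layer_height_m)


if __name__ == "__main__":
    offset = offset_from_surface(0.402, 0.552)  # hypothetical readings, meters
    print(offset, nearest_layer(offset, 0.05))
```

Using the measured value instead of the nominal one absorbs small height variations between layers, while the layer check can flag a touch that landed somewhere unexpected.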

Have a look at the following DoF post, which demonstrates the use of `snapshot_position offset=p[0,0,Z,0,0,0]` and `ignore_snapshot_position=True` (this option is now available in the node):

https://dof.robotiq.com/discussion/1430/camera-detect-object-on-various-plane-and-from-various-camera-location#latest

The Dof Community was shut down in June 2023. This is a read-only archive.

If you have questions about Robotiq products please reach our support team.


bcastets


Hello everyone,

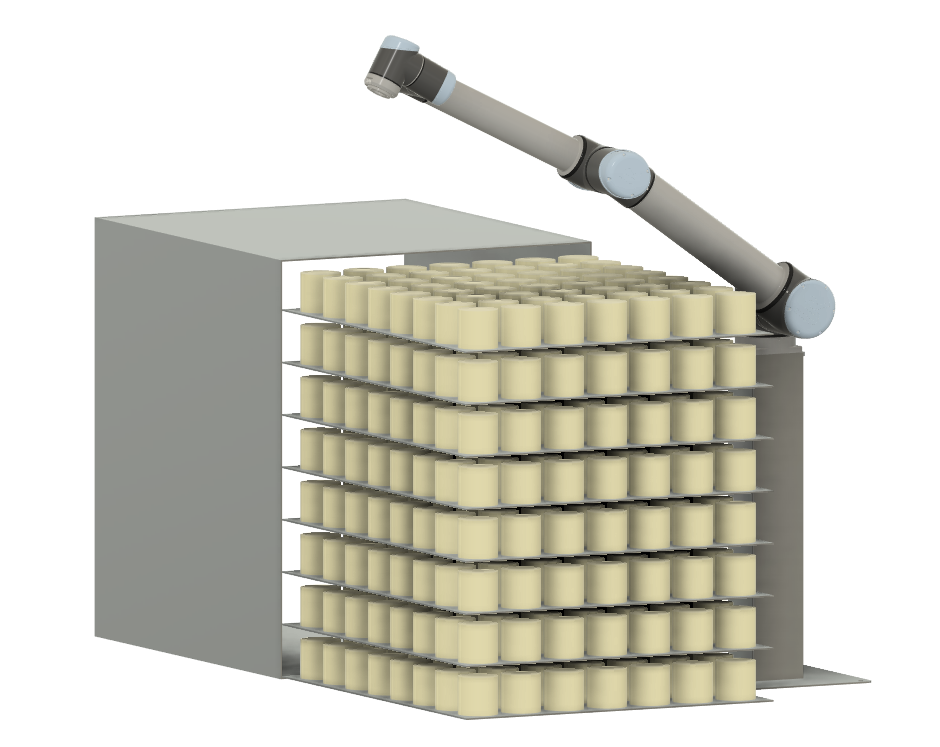

I am working with a customer who wants to pick up cylindrical objects from trays with a UR10, with 56 objects on each tray (see picture). Since the objects must be picked at the center of the cylinder, I want to use vision to find it. My guess is that a single picture of the whole tray taken from above won't be enough to find all the objects, since the apparent shape of a cylinder changes when it is viewed at an angle.

Instead, my idea is to move above each object individually and take a picture to get a more accurate position. What would be the best way to program this? Would I need a separate Cam Locate node for each of the 7x8x8 = 448 positions, or is it possible to include the Cam Locate in a palletizing loop?

The method of picking up the cylinders is not final; this is a suggestion from the customer. The goal is to avoid having to restock the cylinders more than once per hour.

Cheers,

Tor

UR integrator in Sweden