@Vincent @Nicolas_Lauzier @Vincent_Paquin @JeanPhilippe_Mercier : have a look at this!

Samuel_Bouchard

Alexandre_Pare

@xamla_andreas: this is a really nice product. I like the design. Can't wait to see more videos.

Nicolas_Lauzier

This is amazing! Keep up the good work, Andreas. I'm looking forward to seeing everything that people will do with such a nice integration!

Catherine_Bernier

@xamla_andreas Thanks a lot for your great demos.

I just noticed that when the gripper does not grab an object, the robot still proceeds as if it had. It would be interesting to use the gripper's built-in object detection feature.
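As a rough illustration of what such a check could look like, here is a minimal Python sketch that decodes the object detection field (gOBJ) from the gripper status byte. The bit layout (gOBJ in bits 6-7 of the status byte) follows my reading of the Robotiq 2-Finger gripper manual, so please verify it against the manual for your firmware. The function and enum names are hypothetical, and how the status byte is actually read (Modbus, a ROS topic, etc.) is left out.

```python
from enum import IntEnum


class ObjectStatus(IntEnum):
    """Values of the gOBJ field (assumed layout: bits 6-7 of the status byte)."""
    MOVING = 0            # fingers in motion, no object detected
    CONTACT_OPENING = 1   # fingers stopped by contact while opening
    CONTACT_CLOSING = 2   # fingers stopped by contact while closing
    AT_POSITION = 3       # requested position reached, no object (or object lost)


def decode_object_status(gripper_status_byte: int) -> ObjectStatus:
    """Extract gOBJ from the raw gripper status byte (hypothetical helper)."""
    return ObjectStatus((gripper_status_byte >> 6) & 0b11)


def object_grasped(gripper_status_byte: int) -> bool:
    """True only if the fingers stopped on contact while closing."""
    return decode_object_status(gripper_status_byte) is ObjectStatus.CONTACT_CLOSING


if __name__ == "__main__":
    # Example status bytes, made up for illustration.
    for raw in (0xB9, 0xF9):
        print(hex(raw), decode_object_status(raw).name, object_grasped(raw))
```

A pick routine could call `object_grasped` after the close command has finished and retry or skip the brick when it returns `False`, instead of moving on with an empty gripper.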

Here is a support video we made this week: Click Here!

Let me know what you think.

xamla_andreas

We released the xamla_egomo ROS package on GitHub. The repo includes all 3D models needed to print the parts of an Egomo sensor head, plus the software to operate the RGB and depth camera nodes, the Robotiq 2-Finger-85 gripper, and the Robotiq FT-300 sensor wirelessly. It also comes with Lua client tools and the source code of the table-clearing and Duplo-stacking demos. A fast-booting RPi 3 Linux image with ROS will be released in the next few days (also very handy for all kinds of ROS projects with the RPi that need a read-only file system). If you are interested in getting a pre-assembled Egomo device or the Xamla IO-Board for your adaptive robotics experiments, please contact me. :-)

dhenderson

matthewd92

Hi fellow roboticists,

My name is Andreas and I am co-founder of the adaptive robotics start-up Xamla from Münster, Germany. We develop hardware and software for vision- and force-torque-controlled applications. For the combination of a UR5 with the Robotiq force-torque sensor and gripper, we are currently developing an open-source, wireless (and low-cost) sensor head named "egomo".

The main goal of egomo is to give researchers and developers a quick start in the field of adaptive robotics. We try to lower many of the hurdles normally faced today, like high hardware costs and the long time needed for integration, training, adapting, etc.

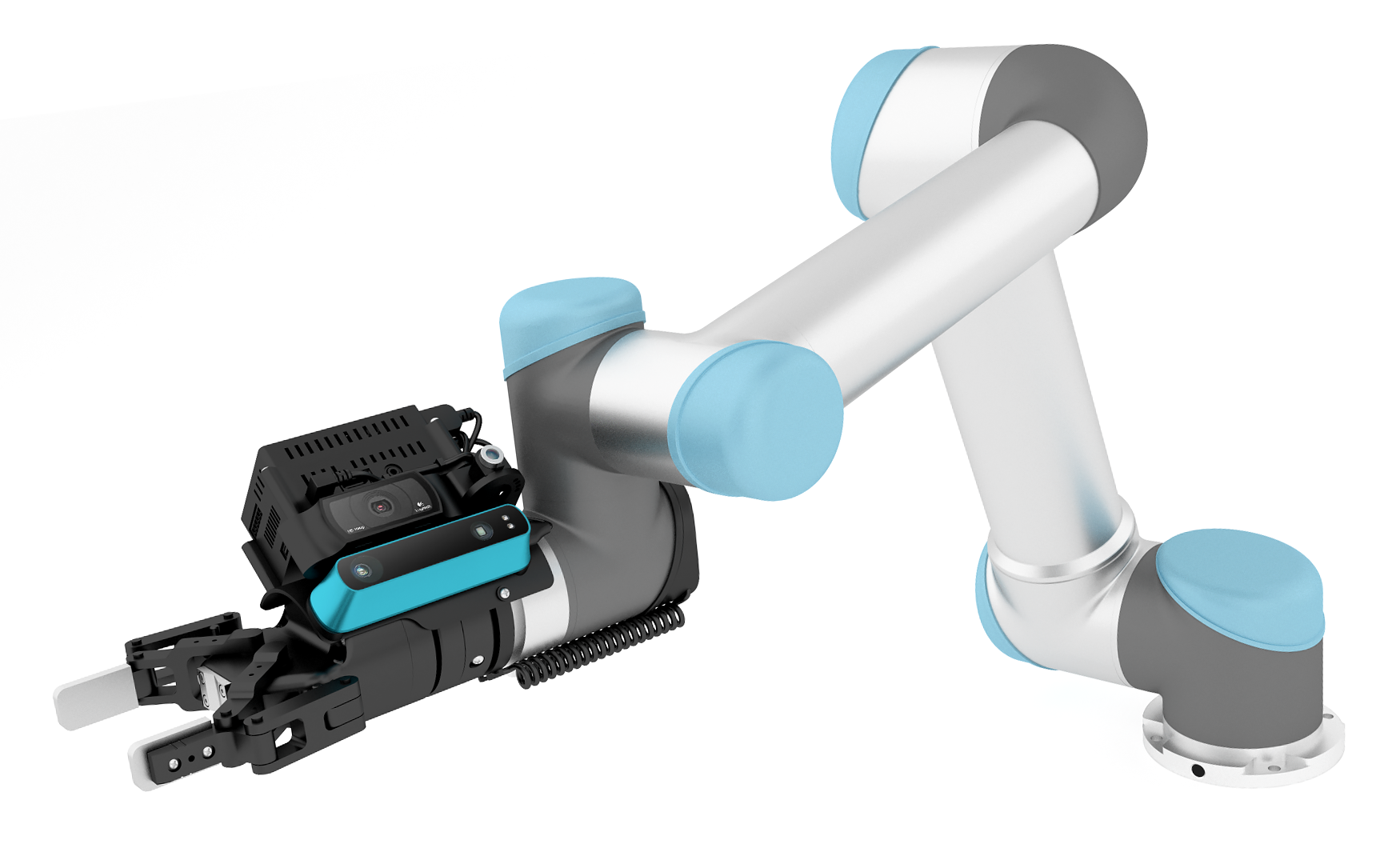

Here is a visualization of the sensor head mounted to a UR5:

Our first iteration of egomo is designed to work on a UR5 in combination with a Robotiq 2-finger-85 gripper, and optionally the Robotiq FT-300 force-torque sensor. Both UR and Robotiq have made affordability, flexibility and ease of integration part of their key features, so it was a perfect platform for us to start developing for!

With its Logitech C920 HD webcam, the Structure IO depth sensor, and an IR line laser diode, "egomo" can create 2D and 3D images of its surroundings. In addition to the Robotiq FT sensor, our custom IO board carries an IMU to capture acceleration data; the board also talks directly to the Robotiq components via Modbus. A Raspberry Pi 3 running Ubuntu and ROS serves as the wireless relay station between the sensors and an external controller PC (with GPU). All software parts, e.g. the SD-card image and ROS nodes, will be made available as open source around the time of Automatica 2016.

You can find a full list of egomo's technical specs on this website, and if you have any questions about specific parts, techniques used, etc., please feel free to ask!

If you want to see egomo in action, we posted this simple pick-and-place demo (finding, gripping, and stacking Duplo bricks) a couple of days ago. The pose of the Duplo bricks is first coarsely measured using a point cloud obtained from the Structure IO depth sensor and subsequently refined using high-resolution stereo triangulation. (The demo runs through the steps slowly for presentation purposes; we'll turn up the speed a bit for future videos.)
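To give a feeling for why a high-resolution stereo step can refine a coarse depth measurement, here is a small Python sketch of the textbook depth-from-disparity relation for an idealized, rectified two-view setup. This is a simplification and not the code used in the demo; the function names and all numbers (focal length in pixels, baseline, disparity) are made up for illustration.

```python
def depth_from_disparity(focal_px: float, baseline_m: float, disparity_px: float) -> float:
    """Depth Z = f * B / d for a rectified stereo pair (idealized pinhole model)."""
    if disparity_px <= 0:
        raise ValueError("disparity must be positive for a point in front of the cameras")
    return focal_px * baseline_m / disparity_px


def depth_change_per_pixel(focal_px: float, baseline_m: float, depth_m: float) -> float:
    """Approximate depth change caused by a one-pixel disparity error: Z^2 / (f * B)."""
    return depth_m ** 2 / (focal_px * baseline_m)


if __name__ == "__main__":
    # Hypothetical values: 1400 px focal length, 5 cm between the two views.
    focal_px, baseline_m = 1400.0, 0.05
    z = depth_from_disparity(focal_px, baseline_m, disparity_px=140.0)
    print(f"depth: {z:.3f} m")
    print(f"depth change per pixel of disparity: {depth_change_per_pixel(focal_px, baseline_m, z) * 1000:.2f} mm")
```

In this model, a larger focal length in pixels (i.e. a higher-resolution image) or a wider baseline reduces the depth change per pixel, which is one reason a stereo refinement can improve on a coarse point-cloud estimate.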

By designing the sensor head to communicate wirelessly, we retain most of the movement potential of the robot arm, something that can quickly become a problem with standard cables. From earlier feedback we know that wireless connections are not acceptable in some industrial settings. For such cases, it is possible to connect the Egomo sensor head with a single high-quality Ethernet cable (which is still better than having 4+ cables).

We are working tirelessly towards the initial release. In the meantime, we would very much welcome any constructive feedback, questions, ideas, etc. Feel free to post here or send me an email (andreas.koepf@xamla.com). We are also looking for beta testers (who have some experience with ROS). If you would like to apply, please get in touch with me via email.

Thank you very much, and looking forward to hearing your feedback!

Andreas